Artificial intelligence is changing how healthcare professionals document care, communicate with patients, and manage administrative workflows. Anthropic's Claude, one of the most capable generative AI models available, is gaining traction among clinicians, therapists, and healthcare administrators for tasks like drafting SOAP notes, summarizing patient records, and composing referral letters.

But healthcare data carries some of the strictest regulatory protections in the United States. Protected Health Information (PHI) is governed by HIPAA, and providers face fines, sanctions, and reputational harm if they mishandle it. That raises a critical question for any healthcare professional considering Claude:

Is Claude HIPAA compliant?

The short answer: Claude is not HIPAA compliant by default.

Anthropic offers a limited path to HIPAA-ready use through its Enterprise product, but only under strict conditions, with significant cost and configuration requirements. For most healthcare organizations, whether solo practices or large health systems, using standard Claude plans with patient data creates serious compliance risk.

This article breaks down the compliance landscape for Claude in healthcare, outlines the risks, explains what Anthropic does and does not cover, and presents alternatives designed specifically for clinical environments.

Claude's Standard Plans: Understanding the Compliance Risks

Anthropic offers several plans intended for individual or team use: Free, Pro ($20/month), Max ($100 to $200/month), and Team ($20/seat/month with a five-seat minimum). These plans give users access to powerful AI capabilities, but none of them include a BAA, and none are appropriate for use with PHI.

Here is why:

1. No BAA on consumer or standard plans

Anthropic's own privacy documentation states clearly that its Business Associate Agreement (BAA) "does not cover Workbench and Console, Claude Free, Pro, Max, or Team plans, and other beta or chat products, features, or integrations". Without a BAA, any use of PHI with these plans is a HIPAA violation by definition.

2. Data may be used for model training

In September 2025, Anthropic introduced an opt-in toggle allowing consumer plan users (Free, Pro, Max) to share their conversations for AI model training. Users who opt in are subject to a data retention period of up to five years, a dramatic increase from the previous 30-day default. Even users who opt out still have data retained for up to 30 days on backend systems.

3. No healthcare-specific safeguards

Claude's standard plans are general-purpose AI tools. They lack the infrastructure, access controls, audit logging, and data isolation that HIPAA's Security Rule requires when handling electronic PHI.

4. Risk of accidental PHI exposure

Staff may assume that paying for a Pro, Max, or Team subscription makes Claude "safe" for clinical use. A therapist drafting session notes, a physician summarizing a patient encounter, or a billing coordinator reviewing a claim could inadvertently expose PHI without realizing the compliance implications.

Given these factors, Claude's consumer and standard plans should not be used for any task involving identifiable patient information.
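As a rough illustration of the rule above, the short Python sketch below encodes the plan coverage described in this article as a lookup table. The dictionary, the function name `plan_has_baa`, and the label `enterprise_hipaa_ready` are hypothetical names chosen for this example, and the coverage values simply restate the article; they should be checked against Anthropic's current documentation before anyone relies on them.

```python
# BAA coverage per plan, as described in this article (not an official list).
# "enterprise_hipaa_ready" is a hypothetical label for the sales-assisted,
# HIPAA-ready Enterprise plan.
BAA_COVERAGE = {
    "free": False,
    "pro": False,
    "max": False,
    "team": False,
    "enterprise_hipaa_ready": True,
}


def plan_has_baa(plan: str) -> bool:
    """Return True only when the named plan is listed as covered by a BAA."""
    # Unknown or misspelled plan names default to False, so a typo or a
    # newly launched plan is never treated as covered.
    return BAA_COVERAGE.get(plan.strip().lower(), False)


if __name__ == "__main__":
    for name in ("Pro", "Team", "enterprise_hipaa_ready", "something-new"):
        print(name, plan_has_baa(name))
```

A BAA is a necessary condition, not a sufficient one. Even a covered plan still needs the configuration and monitoring work described later in this article.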

Claude's Data Training Policy: A Closer Look

Anthropic's consumer privacy policy deserves special attention from healthcare professionals.

Under Anthropic's current Consumer Terms, users of Claude Free, Pro, and Max can opt in to allow their conversations to be used for model training. Users who opt in are subject to a data retention period of up to five years, far longer than the 30-day default. Users who opt out keep the 30-day retention window, though data may still be held on backend systems for that period.

The concern for healthcare is twofold:

- Individual employees may opt in without organizational awareness. A clinician using a personal Claude account might enable the training toggle without understanding that their conversation data, potentially including PHI, could be retained for years in Anthropic's training pipeline. This creates a compliance gap that is difficult to detect and monitor.

- De-identification does not eliminate risk. HIPAA's Safe Harbor method for de-identification requires removal of 18 specific identifiers. Anthropic states it uses "a combination of tools and automated processes to filter or obfuscate sensitive data," but this is not equivalent to a formal HIPAA de-identification process. Healthcare organizations cannot rely on Anthropic's internal processes to meet their own compliance obligations.
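To make the second point concrete, here is a minimal Python sketch of the kind of pattern-based screening an automated filter might perform. It is an assumption-laden illustration, not a description of Anthropic's processes and not a HIPAA de-identification method. The four regular expressions and the function name `flag_identifiers` are invented for this example and cover only a handful of the 18 Safe Harbor identifier types.

```python
import re

# Illustrative patterns for a few identifier types only.
# HIPAA Safe Harbor lists 18 identifier categories; this covers four, loosely.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "phone": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "date": re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b"),
}


def flag_identifiers(text: str) -> dict[str, list[str]]:
    """Return the matches found for each pattern, omitting patterns with no hits."""
    found = {name: rx.findall(text) for name, rx in PATTERNS.items()}
    return {name: hits for name, hits in found.items() if hits}


if __name__ == "__main__":
    note = "Jane Doe, seen 03/14/2025, call 555-123-4567 re: follow-up."
    print(flag_identifiers(note))
```

In the sample note, the date and phone number are flagged, but the patient's name passes through untouched. That gap is why simple filtering cannot stand in for a formal de-identification process.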

These data training policies do not apply to commercial products (Claude for Work, Enterprise), but the risk lies in the gap between what an organization intends and what individual users actually do.

Can Claude Be Used in a HIPAA-Compliant Way?

Anthropic does offer a pathway toward HIPAA-ready use, but it comes with significant barriers for most healthcare organizations.

The HIPAA-ready offering requires Enterprise

Anthropic's HIPAA-ready plan is available only through its sales-assisted Enterprise tier, which requires a minimum of 50 seats, with per-seat fees reportedly starting around $60 per month and all usage billed separately at API rates. At that minimum (50 seats × $60 × 12 months), HIPAA-ready Claude costs an estimated $36,000 or more annually before usage fees. Organizations must contact Anthropic's sales team and undergo a review process, and approval is not guaranteed. For solo practitioners, small group practices, and mid-size clinics, the combination of sales-gated access, minimum seat requirements, and variable usage costs puts this option out of reach.
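The cost floor can be reproduced with a few lines of Python. This is a back-of-the-envelope sketch using the reported figures from this article. The constant and function names are hypothetical, the per-seat fee is an estimate and not a quoted price, and API usage charges are deliberately left out.

```python
SEATS_MIN = 50         # minimum seat count cited in this article
SEAT_FEE_MONTHLY = 60  # reported starting per-seat fee in USD (estimate, not a quote)
MONTHS_PER_YEAR = 12


def annual_seat_floor(seats: int = SEATS_MIN, fee: int = SEAT_FEE_MONTHLY) -> int:
    """Annual seat cost in USD, excluding API usage that is billed separately."""
    return seats * fee * MONTHS_PER_YEAR


if __name__ == "__main__":
    print(annual_seat_floor())  # 36000
```

Because usage is billed on top of seats, the actual annual figure for an active team would be higher than this floor.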

Some features are excluded from compliance coverage

Even on the HIPAA-ready plan, certain features remain outside the scope of the BAA. Anthropic's documentation notes that Claude Code bundled seats are not currently covered, and newer features such as Cowork are not yet available on HIPAA-ready Enterprise plans. Organizations must understand which features are and are not covered, monitor changes as Anthropic releases updates, and train staff accordingly.

Configuration and monitoring fall on the organization

Signing up for an Enterprise plan does not make Claude compliant by itself. Administrators must configure access controls, disable risky features, maintain audit logs, and monitor usage on an ongoing basis. As Anthropic releases new features, each one must be evaluated for compliance impact before it is enabled.

Cloud alternatives add more complexity

Claude is also available through AWS Bedrock, Google Cloud Vertex AI, and Microsoft Azure, each with its own BAA covering the infrastructure layer. These paths are technically viable but require cloud engineering expertise, add infrastructure costs, and place full configuration responsibility on the organization.

Practical Challenges of Using Claude in Healthcare

Even for organizations that secure a HIPAA-ready Enterprise plan, day-to-day operational challenges remain.

User confusion

Clinicians may not understand why they can use Claude on their work computer but not on a personal device, or why certain features are unavailable. This creates friction and increases the risk of accidental violations.

Content filtering gaps

General-purpose AI platforms sometimes block discussions of sensitive clinical topics like trauma, abuse, substance use, or anatomical details. Healthcare professionals need an AI tool that can handle these subjects without refusal or content warnings that disrupt clinical workflows.

Accuracy risks

AI-generated clinical content must be reviewed by qualified professionals. Outputs involving medication dosages, diagnostic criteria, or treatment protocols require careful verification.

Example: A behavioral health practice signs up for Claude's Enterprise plan and configures it for HIPAA-ready use. A new therapist joins the team and, not understanding the distinction, begins using Claude Pro on a personal account to draft therapy notes. Because the personal account has no BAA, every session note entered constitutes a potential HIPAA violation, even though the organization's Enterprise account is properly configured.

BastionGPT: A Healthcare-First Alternative

For organizations that want access to advanced AI without the compliance burden that Claude's HIPAA-ready pathway requires, BastionGPT is a purpose-built solution designed from the ground up for healthcare.

Automatic BAA on all plans. Every BastionGPT subscription includes a HIPAA BAA in the terms of use. There is no sales review, no approval process, and no minimum user requirement. A solo practitioner on the $20/month Professional plan receives the same BAA coverage as a 500-person health system.

Compliance by design. BastionGPT was developed by healthcare and cybersecurity professionals specifically for HIPAA, PIPEDA, and APP compliance. The platform assumes all input may contain regulated data and treats it accordingly.

Proven compliance track record. BastionGPT has offered HIPAA-compliant AI with a BAA since April 2023. Anthropic's HIPAA-ready Enterprise offering launched in late 2025.

Data is never used for training. User prompts, documents, and AI outputs are never shared with underlying AI providers for training or any other purpose. There is no opt-in toggle, no five-year retention pipeline, and no ambiguity. Data is securely wiped after 30 days by default, and users can delete it sooner.

Multi-model access with built-in security. BastionGPT integrates multiple leading AI models within a single secure interface configured for medical use cases, giving users the power of advanced generative AI without managing separate vendor relationships or compliance configurations.

Healthcare-specific workflows and AI Scribe. BastionGPT includes tools built for clinical documentation (SOAP, DAP, BIRP notes, treatment plans, referral letters, prior authorizations, and more), a Saved Prompts library for standardizing workflows, and HIPAA-compliant audio transcription with multi-speaker recognition.

Healthcare-appropriate content filtering. BastionGPT allows discussions of sensitive clinical topics (trauma, abuse, substance use, anatomical details) that standard AI platforms may block, a critical capability for mental health professionals and clinicians working with complex cases.

Accessible pricing. Professional plans start at $20/user/month. Professional Plus is $45/user/month. All plans include a 7-day free trial with no credit card required and a 45-day satisfaction guarantee.

Alexis Arceo, CEO, Expedited Reports: "The HIPAA compliance is a huge time saver because I do not have to take out identifying information."

David Lopis, Director, Psychology Squared: "BastionGPT has a lot more capabilities with data protection, so clinicians can more freely use AI."

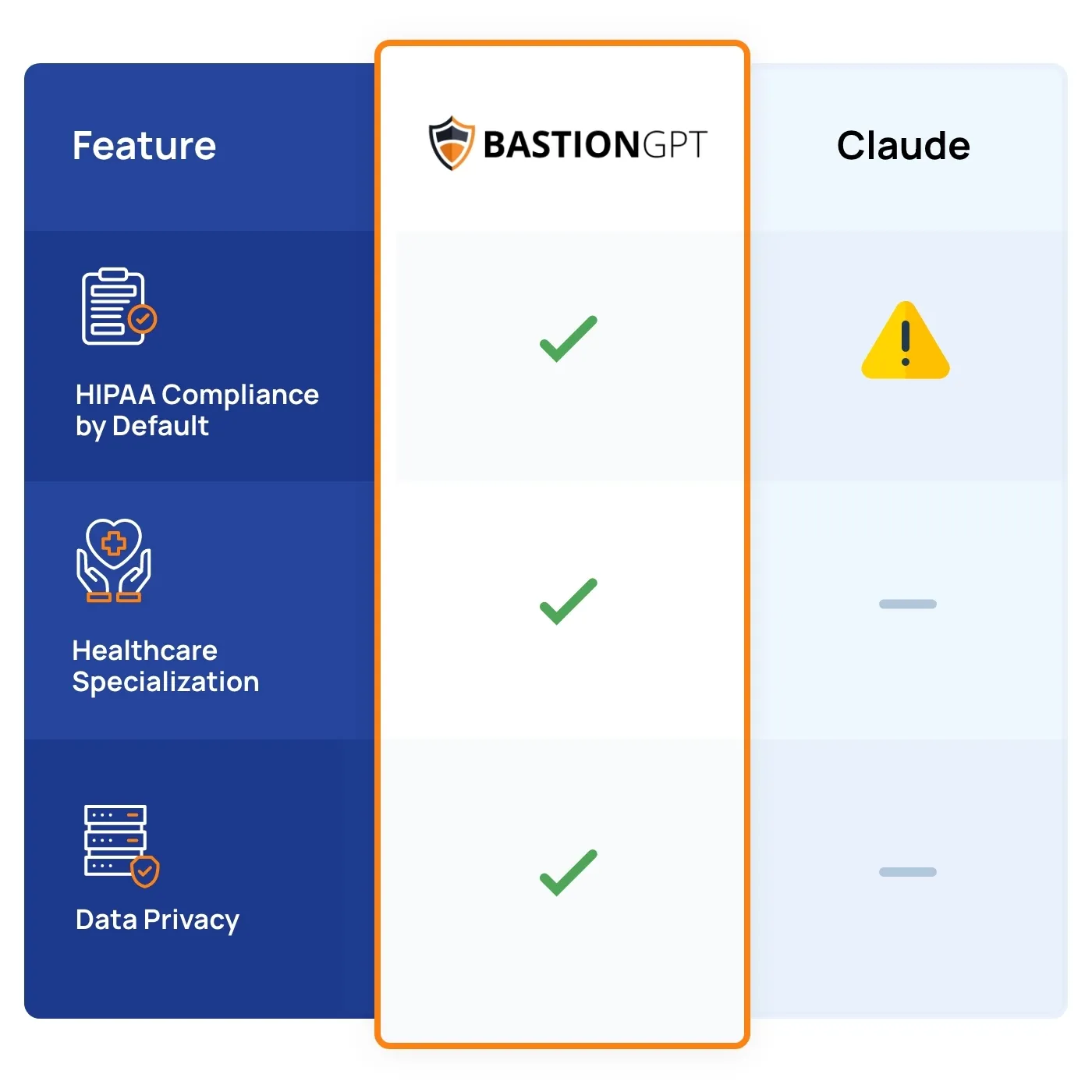

Comparison: BastionGPT vs. Claude

Conclusion

Claude is a capable AI assistant, but it is not HIPAA compliant by default. Standard plans (Free, Pro, Max, Team) should not be used with PHI. Anthropic's HIPAA-ready Enterprise offering requires a sales process, a 50-seat minimum, annual contracts, and ongoing configuration and monitoring by the healthcare organization.

Most users can sign up and start using BastionGPT in as little as 10 minutes. There are no setup costs, a 7-day free trial, and no fixed commitments. Whether you need secure transcription, support drafting SOAP notes and treatment plans, or help with prior authorizations and insurance appeals, BastionGPT is designed to deliver fast, reliable, HIPAA-compliant results.

Begin your journey with a 7-day free trial of BastionGPT.

If you have questions or want to connect:

Email: [email protected]

Phone: +1 (214) 619-8696

Schedule a Chat: Book a Meeting

Frequently Asked Questions (FAQ)

Is Claude HIPAA compliant?

Claude is not HIPAA compliant by default. Anthropic's BAA does not cover Claude Free, Pro, Max, or Team plans. HIPAA-ready use is only available through Anthropic's sales-assisted Enterprise plan (50-seat minimum) after a review process with no guaranteed approval.

Does Anthropic sign a BAA for Claude?

Anthropic may provide a BAA for its sales-assisted Enterprise customers after reviewing compliance requirements and the specific use case. The BAA is not available for consumer plans, and approval is not automatic.

Can therapists use Claude for session notes?

Therapists and mental health professionals should not use Claude's consumer plans (Free, Pro, Max) for session notes or any documentation containing PHI. These plans lack a BAA, and data entered could be retained or used to improve AI models if training is enabled. HIPAA-compliant alternatives like BastionGPT are designed specifically for this use case.

What happens if I use Claude with patient data on a standard plan?

Using PHI with any Claude plan that lacks a BAA constitutes a potential HIPAA violation. This can result in regulatory penalties, breach notification requirements, and reputational damage. HIPAA fines range from thousands to millions of dollars depending on the severity and nature of the violation.

Does Claude use my data for AI training?

On consumer plans (Free, Pro, Max), Anthropic offers an opt-in setting that allows conversations to be used for model training, with data retained for up to five years. Users who opt out keep the default 30-day retention period. Enterprise plans are excluded from this policy. Deleted conversations are not used for training under any plan.

Is there a HIPAA-compliant alternative to Claude for healthcare?

BastionGPT is designed specifically for healthcare, with HIPAA compliance built into every plan. It includes an automatic BAA, HIPAA-compliant transcription, clinical documentation templates, and access to multiple leading AI models within a secure environment. Plans start at $20/user/month with a 7-day free trial.

Can I use Claude through AWS or Google Cloud for HIPAA compliance?

Claude is available through AWS Bedrock, Google Cloud Vertex AI, and Microsoft Azure, each of which offers HIPAA-eligible infrastructure. This creates a technically viable path, but the organization bears full responsibility for compliant configuration, access controls, audit logging, and ongoing monitoring. This approach requires technical expertise and adds infrastructure costs.

What are the risks of using Claude with PHI?

The main risks are PHI exposure through non-compliant plans, unauthorized data retention, potential use of data for AI model training, regulatory fines, breach notification requirements, and erosion of patient trust.

Disclaimer: This article provides general information about HIPAA compliance and AI tools based on publicly available information as of April 2026. It does not constitute legal advice. Healthcare organizations should consult with qualified legal counsel and compliance experts to confirm their specific use of any technology meets HIPAA requirements and other applicable regulations. AI provider policies and features are subject to change.

Sources

Plans & Pricing, Anthropic: https://claude.com/pricing

Business Associate Agreements for Commercial Customers, Anthropic Privacy Center: https://privacy.claude.com/en/articles/8114513-business-associate-agreements-baa-for-commercial-customers

HIPAA-ready Enterprise plans, Anthropic Help Center: https://support.claude.com/en/articles/13296973-hipaa-ready-enterprise-plans

Updates to Consumer Terms and Privacy Policy, Anthropic: https://www.anthropic.com/news/updates-to-our-consumer-terms